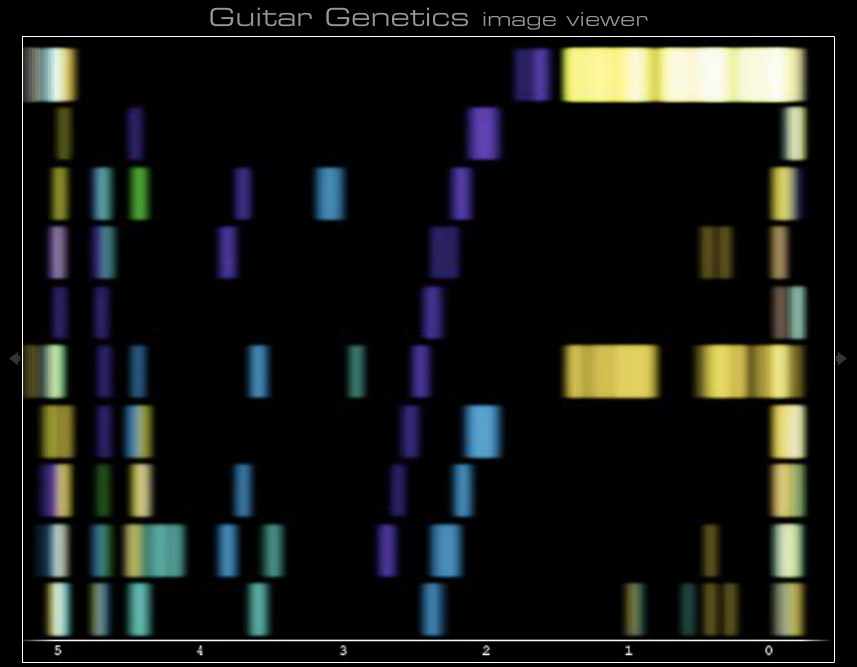

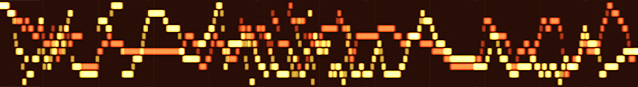

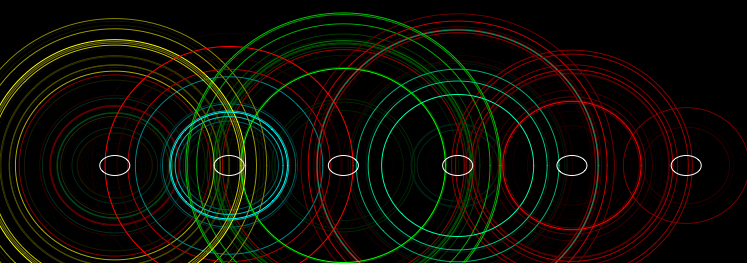

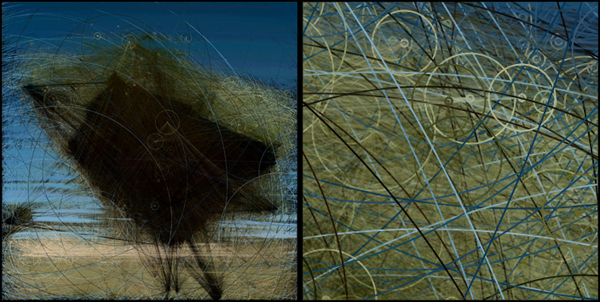

Guitar Genetics is the project I’ve been working on for the last six weeks or so, combining an electric guitar and Processing. I feed an electric guitar through a headphone amplifier and into the line-in on my laptop. (I also split the signal before the headphone amp and send it to a real amp, so you can hear yourself as you play.) Using the Fast Fourier Transform from the Sonia library, I made a visualization that resembles DNA patterns. The color of each plot depends on which string of the guitar is plucked, and the vertical position is based on the fret. Since I am using pseudo-note detection, the program reads extra notes most of the time, which produces more interesting visuals. To create a print, all the note information from a recording session is saved to an XML file, which is then run through a render engine I built so I can produce high-resolution prints. I have a more detailed case study below, or you can download it as a PDF.
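To give a rough idea of how a detected pitch can become a string and a fret, here is a minimal Python sketch. It is not the code from the project, which is written in Processing. It assumes standard tuning, a 22-fret neck, and a simple rule of my own choosing: when a note can be played on several strings, take the one with the lowest fret. The names `nearest_note` and `plot_y` are hypothetical, and the input frequency is assumed to be the strongest peak coming out of the FFT.

```python
import math

# Open-string frequencies in Hz for standard tuning, low E to high E.
OPEN_STRINGS = [82.41, 110.00, 146.83, 196.00, 246.94, 329.63]
NUM_FRETS = 22  # assumption: a 22-fret neck


def nearest_note(freq_hz):
    """Return (string_index, fret) for the closest playable note, or None.

    Each fret raises the pitch by one semitone, so the fret number is
    12 * log2(frequency / open-string frequency), rounded to an integer.
    """
    if freq_hz <= 0:
        return None
    best = None
    for string_index, open_hz in enumerate(OPEN_STRINGS):
        fret = round(12 * math.log2(freq_hz / open_hz))
        if 0 <= fret <= NUM_FRETS:
            # Assumed tie-break: prefer the string with the lowest fret.
            if best is None or fret < best[1]:
                best = (string_index, fret)
    return best


def plot_y(fret, height=600):
    """Map a fret to a vertical pixel position (assumed linear mapping)."""
    return round(height * fret / NUM_FRETS)
```

For example, `nearest_note(110.0)` gives string index 1 (the A string) at fret 0, and a frequency below the low E string gives `None`. A detector this simple will often lock onto harmonics instead of the fundamental, which is one way extra notes like the ones described above can show up.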

There are more images on Flickr.